📰 Trending Topics

Google News - Trending

6 Fantasy Football RBs Whose Touchdowns Are Trending Up & Down - 4for4 Fantasy Football

2026-06-09 13:17

Google News - Technology

I tried Siri AI, and so far it actually works - The Verge

2026-06-09 23:43

- Apple’s new Siri is a dark horse in the AI race The Economist

- Apple bets boring is better NBC News

- NVIDIA Confidential Computing to Help Expand Apple’s Private Cloud Compute NVIDIA Blog

- Introducing the Third Generation of Apple’s Foundation Models Apple Machine Learning Research

The Top New Features in Apple’s iOS 27 and iPadOS 27 - WIRED

2026-06-09 17:03

Google teams up with Paris Hilton to showcase Android and AI app building - 9to5Google

2026-06-09 17:40

- Paris Hilton is Android’s first icon in residence blog.google

- Gemini Canvas helped Paris Hilton turn an idea into an app, and she didn't write a line of code Android Authority

- Paris Hilton Builds Her Own Android App Using Google AI: No Code, Just Vibes Android Headlines

- Paris Hilton shows how Gemini turns ideas into real Android apps Sammy Fans

macOS 27 Finally Brings Direct Touch Control to Sidecar - MacRumors

2026-06-09 16:17

- MacBook Ultra: 5 Features That Could Justify the Name MacRumors

- Omdia: OLED display demand for notebook PCs to reach $11.5 billion by 2033 Omdia

- Ahead of Apple MacBook Ultra: macOS 27 gets touchscreen support Notebookcheck

- Leaked: Apple’s M6 OLED MacBook Pro is the Redesign We’ve Been Waiting For Geeky Gadgets

NASA - Breaking News

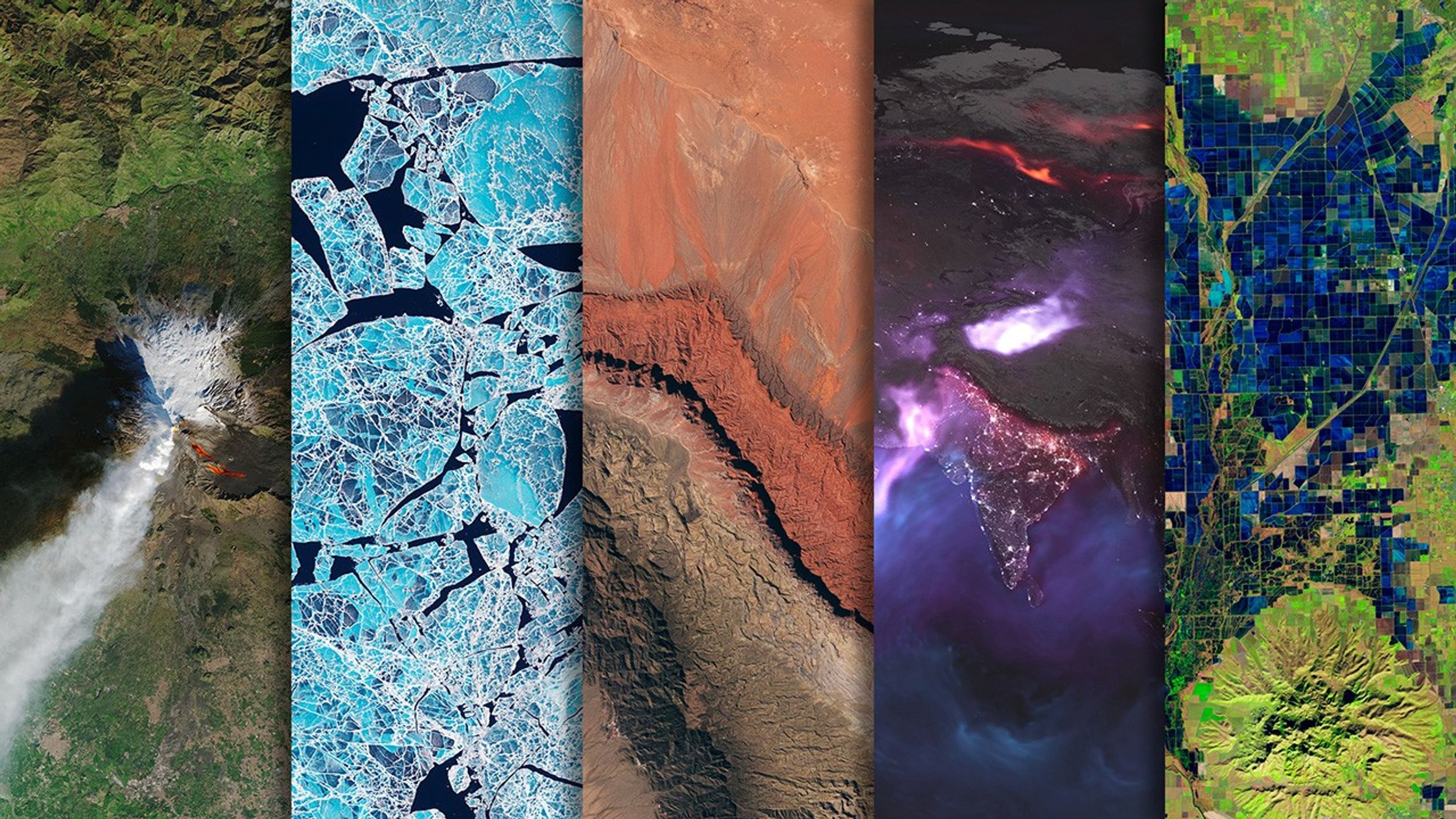

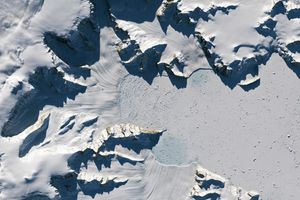

Tyndall’s Trail of Bergs

2026-06-10 04:01

The Southern Patagonian Icefield is the largest expanse of ice in the Southern Hemisphere outside of Antarctica. The mass of glacial ice extends hundreds of kilometers along the spine of the Andes, feeding dozens of dynamic outlet glaciers that grind their way down from higher elevations. Many of these rivers of ice terminate in the sea or in proglacial lakes.

An astronaut aboard the International Space Station photographed one of these glaciers—Tyndall Glacier in southern Chile—through a layer of ethereal clouds on May 10, 2026. Fragments of ice that had calved off its terminus were visible floating on Lago Geikie.

Like most Patagonian glaciers, Tyndall has been shrinking since the end of the Little Ice Age about 150 years ago. Lago Geikie formed at Tyndall’s terminus around 1940, according to glaciologist Mauri Pelto of Nichols College, and gradually expanded as the ice retreated. Part of the glacier previously terminated in Lago Tyndall to the east, but thinning ice cut off that outlet by 2010, Pelto said. (The ice’s retreat also exposed bedrock along its eastern edge that contains scores of ichthyosaur fossils.)

Along with thinning, ice calving off the glacier’s front has reduced its volume. Tyndall has lost 2.2 kilometers (1.4 miles) in length since November 2022, Pelto said, following about a decade of limited retreat with considerable thinning. A significant calving event in March and April 2023 contributed to the recent uptick in ice retreat. During that time, satellites observed several large icebergs breaking away from Tyndall’s terminus.

Austral autumn in 2026 was a time of active calving retreat at Tyndall (and some neighboring glaciers), Pelto said, albeit more incremental than three years prior. “The substantial crevasses crisscrossing the glacier near the calving front lead to many smaller icebergs,” he said. On the other hand, larger tabular icebergs tend to form when there are fewer deep crevasses near the terminus and the glacier’s ice is thinner.

The ice cliff at the terminus casts a substantial shadow, which can help scientists estimate the height of the glacier’s front. Pelto’s calculations, using information about the Sun’s position provided with the image, indicate that Tyndall’s front loomed 30–40 meters (100–130 feet) above the lake surface in May 2026. Observations from orbit, including astronaut photographs, can help scientists monitor and understand glaciers in remote regions where ground-based observations are scarce.
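The shadow-based height estimate described above comes down to simple trigonometry: on a level surface, a vertical face of height h casts a shadow of length h / tan(Sun elevation). The sketch below is only an illustration of that relationship, not Pelto's actual calculation. The shadow length and Sun elevation used here are hypothetical values chosen for the example; neither appears in the article.

```python
import math

METERS_TO_FEET = 3.28084


def cliff_height_m(shadow_length_m: float, sun_elevation_deg: float) -> float:
    """Estimate the height of a vertical face from its shadow.

    Assumes the shadow falls on a level surface (here, the lake) and is
    measured along the direction pointing away from the Sun.
    """
    if not 0.0 < sun_elevation_deg < 90.0:
        raise ValueError("Sun elevation must be between 0 and 90 degrees")
    return shadow_length_m * math.tan(math.radians(sun_elevation_deg))


# Hypothetical inputs, for illustration only (not taken from the article):
# a 100 m shadow with the Sun 20 degrees above the horizon.
height_m = cliff_height_m(shadow_length_m=100.0, sun_elevation_deg=20.0)
print(f"Estimated ice-front height: {height_m:.1f} m "
      f"({height_m * METERS_TO_FEET:.0f} ft)")
```

A lower Sun lengthens the shadow for the same cliff height, so the Sun's position recorded with the image matters as much as the measured shadow length.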

As for what comes next for Tyndall, Pelto expects many more small icebergs to continue breaking off, given the heavily crevassed appearance of the calving front. “Look for a burst of iceberg production next fall.”

Astronaut photograph ISS074-E-582898 was acquired on May 10, 2026, with a Nikon Z9 digital camera using a focal length of 560 millimeters. It is provided by the ISS Crew Earth Observations Facility and the Earth Science and Remote Sensing Unit at NASA Johnson Space Center. The image was taken by a member of the Expedition 74 crew. The image has been cropped and enhanced to improve contrast, and lens artifacts have been removed. The International Space Station Program supports the laboratory as part of the ISS National Lab to help astronauts take pictures of Earth that will be of the greatest value to scientists and the public, and to make those images freely available on the Internet. Additional images taken by astronauts and cosmonauts can be viewed at the NASA/JSC Gateway to Astronaut Photography of Earth. Story by Lindsey Doermann.

References & Resources

- AntarcticGlaciers.org (2020, June 22) The Patagonian Icefields today. Accessed June 9, 2026.

- From a Glaciers Perspective (2026, February 28) Glaciar Mayo, Argentina Terminus Collapsing in 2026: A Familiar Pattern. Accessed June 9, 2026.

- From a Glaciers Perspective (2023, April 18) Tyndall Glacier, Chile April 2023 Calving Retreat. Accessed June 9, 2026.

- Minowa M., et al. (2023) Effects of topography on dynamics and mass loss of lake-terminating glaciers in southern Patagonia. Journal of Glaciology, 69(278), 1580-1597.

- NASA Earth Observatory (2017, July 14) Ice on the Move in Patagonia. Accessed June 9, 2026.

- NASA Earth Observatory (2007, December 24) Tyndall Glacier, Chile. Accessed June 9, 2026.

Flight Dynamics Research Facility Characteristics

2026-06-09 20:47

The Flight Dynamics Research Facility (FDRF) is a large, subsonic wind tunnel with a vertical test section. It is used for flight dynamics research on atmospheric vehicles, including stability, controllability, free-fall, aircraft spin, and spin recovery testing.

Characteristics

- Test Section Dimensions: 20 ft. diam. by 24 ft. high

- Speed: 0 – 172 ft/s (0 – 117 mph)

- Dynamic Pressure: 0 – 35 psf

- Reynolds Number: 0 – 1.10×10^6 per ft.

- Pressure: Atmospheric

- Temperature: Actively cooled (79° F)

- Test Gas: Air

- Facility Height: 131 ft.
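The listed speed, dynamic pressure, and unit Reynolds number are related through the standard definitions q = ½ρV² and Re per unit length = ρV/μ. The sketch below checks the listed maxima against those formulas. The air density and viscosity are assumed standard sea-level values, not figures from the facility description, so the results are only approximate (the tunnel's actual conditions, such as its 79° F air, will differ somewhat).

```python
# Assumed standard sea-level air properties (not from the facility listing).
RHO_SLUG_PER_FT3 = 0.002377    # air density, slug/ft^3
MU_SLUG_PER_FT_S = 3.737e-7    # dynamic viscosity, slug/(ft*s)


def ftps_to_mph(speed_ftps: float) -> float:
    """Convert feet per second to miles per hour."""
    return speed_ftps * 3600.0 / 5280.0


def dynamic_pressure_psf(speed_ftps: float) -> float:
    """Dynamic pressure q = 0.5 * rho * V^2, in pounds per square foot."""
    return 0.5 * RHO_SLUG_PER_FT3 * speed_ftps ** 2


def unit_reynolds_per_ft(speed_ftps: float) -> float:
    """Reynolds number per foot of reference length, rho * V / mu."""
    return RHO_SLUG_PER_FT3 * speed_ftps / MU_SLUG_PER_FT_S


max_speed_ftps = 172.0  # top of the listed speed range
print(f"Speed:            {ftps_to_mph(max_speed_ftps):.0f} mph")
print(f"Dynamic pressure: {dynamic_pressure_psf(max_speed_ftps):.1f} psf")
print(f"Unit Reynolds:    {unit_reynolds_per_ft(max_speed_ftps):.2e} per ft")
```

With these assumed properties, the computed values land close to the listed 117 mph, 35 psf, and 1.10×10^6 per ft.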

Artemis III Crew Announced

2026-06-09 19:32

NASA astronaut Andre Douglas, ESA (European Space Agency) astronaut Luca Parmitano, and NASA astronauts Randy Bresnik and Frank Rubio take a photo together on June 9, 2026. The four were announced as the Artemis III crew.

NASA’s Artemis III mission, to be flown in low Earth orbit, will test integrated operations between the Orion spacecraft and one or both of the commercial landers being developed by SpaceX and Blue Origin.

Learn more about the next Artemis mission and the crew.

Image credit: NASA/Robert Markowitz

NASA Advances Toward Artemis III Mission in 2027 and Announces Its Crew

2026-06-09 16:19

NASA took another step on Tuesday toward one of the most complex crewed missions in recent history, offering new details about Artemis III and announcing the four prime crew members and a backup for this test flight. In 2027, the mission will carry out a series of demanding tests near Earth that are essential for Artemis IV, the first crewed mission to the lunar South Pole, planned for 2028.

During the Artemis III mission, the agency’s SLS (Space Launch System) rocket will launch the Orion spacecraft and its crew from NASA’s Kennedy Space Center in Florida to low Earth orbit. After Orion systems checkouts, the spacecraft will demonstrate for the first time its rendezvous and docking capabilities with test versions of one or both American commercial human landing systems, which are being developed by Blue Origin and SpaceX. This carefully choreographed mission includes a spectacular multi-launch campaign of the world’s most powerful rockets and will test the integrated hardware between Orion and the landers, as well as system interfaces, software, propulsion, and communications.

The astronauts assigned to the crew are as follows:

- NASA astronaut Randy Bresnik, commander

- ESA (European Space Agency) astronaut Luca Parmitano, pilot

- NASA astronaut Andre Douglas, mission specialist

- NASA astronaut Frank Rubio, mission specialist

During Tuesday’s event, NASA astronaut Bob Hines was named as a backup crew member. The crew will begin training immediately on Orion spacecraft systems and will also assist in the development and operations of the test versions of the Blue Origin and SpaceX landers.

“Today we take another bold step in humanity’s return to the Moon, building on the extraordinary foundation laid by the Artemis II astronauts,” said NASA Administrator Jared Isaacman. “Their achievements reignited global excitement for exploration, and now they pass the torch to the Artemis III team: Randy, Luca, Frank, and Andre. Artemis III will demonstrate the power of American innovation and international partnership as we test complex rendezvous and docking operations and advance the technologies that will one day carry us deeper into the solar system. This mission will require the most awe-inspiring coordination of heavy-lift rocket launches in history, drawing on the talent and capabilities of teams across government and the spaceflight community. The Artemis III astronauts, alongside ESA and our international partners, and the tens of thousands of the best and brightest across the agency and industry, are ushering in a new golden age of exploration, carrying forward the hopes and dreams of the next generation just as the Apollo astronauts did for so many of us.”

This is also the first time an ESA astronaut has been assigned to an Artemis mission.

“Artemis III will push the boundaries of spacecraft operations in orbit. Luca’s assignment as pilot reflects the depth of European expertise in human spaceflight and builds on his extensive operational experience in high-pressure situations,” said Josef Aschbacher, ESA’s director general. “At the same time, ESA’s European Service Module will once again provide the critical capabilities that power Orion, demonstrating Europe’s enduring presence at the very heart of the Artemis program. The news coming out of Houston today is a powerful recognition of ESA’s role in enabling humanity’s return to the Moon, and a key advancement in our partnership with NASA. Europeans can take pride in being part of this exciting journey.”

Mission progress

NASA and its partners are making progress on preparations for the test flight. This northern summer, engineering teams will connect the Orion crew module and service module and integrate the spacecraft’s docking system, which will fly for the first time. Heat shield testing continues, as each of the blocks has undergone ultrasonic inspection and been installed on the heat shield structure.

Rocket processing is also well underway. SLS technicians are integrating the engine section with the rest of the core stage ahead of installing the four RS-25 engines this northern summer. With all of the rocket’s solid booster segments now at NASA Kennedy and mobile launcher refurbishment progressing as planned, rocket stacking is also expected to begin this summer. NASA continues the design and fabrication of a spacer segment that will replace the upper stage on Artemis III.

Blue Origin is developing a crewed version of its Blue Moon lunar lander, while SpaceX is developing a crewed lunar lander version of its Starship vehicle. Both companies are building test articles for Artemis III. NASA is providing direct support to both lander providers throughout design, development, testing, and evaluation, including sharing agency expertise and capabilities gained from previous missions.

During the event, NASA offered updates from the agency and both commercial partners, as well as details about the planned operations for Artemis III, which will support a higher mission cadence, increase production, and drive supply chain improvements for the Artemis program.

The Artemis III mission builds on the successful Artemis II flight, which was completed in April, and will help the agency prepare to send the first astronauts, Americans, to Mars.

Artemis III calls for launching the world’s most powerful rockets in rapid succession. Blue Origin’s pathfinder lander, which can remain in orbit for several weeks, will launch first and await the crew. NASA will use the SLS rocket to send the astronauts aboard Orion into Earth orbit, ahead of a rendezvous in space with the company’s lander test article. Orion will remain docked with it for about two days to carry out tests and technology demonstrations, including entering the lander.

After completing docked operations with Blue Origin, Orion will separate and await Starship. SpaceX’s Starship pathfinder will launch and rendezvous with Orion to spend approximately one day docked for checkouts and testing. After that, Orion and its crew will undock and return home, splashing down safely in the Pacific Ocean, where a team from the U.S. Navy and NASA will recover the astronauts.

In total, the crew is expected to remain in space for about two weeks. The exact mission duration will be determined in real time based on launch, rendezvous, and docking operations.

More about the Artemis III crew members

This will be Bresnik’s third space mission. He launched aboard space shuttle Atlantis on the STS-129 mission to the International Space Station in 2009. He later traveled to the space station on the Soyuz MS-05 spacecraft from the Baikonur Cosmodrome in Kazakhstan and served as a flight engineer for Expedition 52 and as commander of the station’s Expedition 53. A California native, he graduated from The Citadel with a degree in mathematics and was selected by NASA in the 2004 astronaut candidate class. A retired U.S. Marine Corps colonel, he has logged more than 7,000 flight hours in 95 types of aircraft and is a fellow of the Society of Experimental Test Pilots. Since 2018, he has served as assistant to the chief of the Astronaut Office for exploration, overseeing the development and testing of the spacecraft and systems that will operate during Artemis missions.

Artemis III will also be Parmitano’s third spaceflight. Selected by ESA as an astronaut in 2009, he first served as a flight engineer on the Italian Space Agency’s (ASI) first long-duration mission to the space station, launching on a Soyuz from Baikonur in 2013. He returned to the orbital laboratory in 2019 aboard Soyuz MS-13 for his second mission, during which he served as commander of Expedition 61 and became the third European, and the first Italian, to command the station. Parmitano earned a bachelor’s degree in political sciences from the University of Naples Federico II and a master’s degree in experimental flight test engineering from the Institut Supérieur de l’Aéronautique et de l’Espace in Toulouse, France. A graduate of the Italian Air Force Academy, he became a test pilot in 2007 and was promoted to colonel in 2019. He has logged more than 2,000 flight hours in 40 types of aircraft.

This will be the second trip to space for Rubio, who launched aboard the Soyuz MS-22 spacecraft from Baikonur to the space station on Sept. 21, 2022, and returned on Sept. 27, 2023, breaking the record for the longest single spaceflight by an American astronaut with 371 days in orbit. Rubio was selected by NASA in the 2017 astronaut candidate class. A Florida native, he graduated from the U.S. Military Academy in 1998, earned a doctor of medicine from the Uniformed Services University of the Health Sciences in 2010, and has served for more than 28 years in the U.S. Army as an aviator, a physician, and an astronaut.

The mission is Douglas’ first spaceflight. He was selected by NASA in the 2021 astronaut candidate class and previously served as a backup and closeout crew member for the agency’s Artemis II mission. A Virginia native, Douglas earned a bachelor’s degree in mechanical engineering from the U.S. Coast Guard Academy and four postgraduate degrees from various institutions, including a doctorate in systems engineering from George Washington University. During his time in the Coast Guard, he conducted search and rescue, maritime salvage, and drug interdiction operations. In addition, his work at the Johns Hopkins University Applied Physics Laboratory included designing and testing multidomain autonomous vehicles, space exploration systems, and numerous undersea warfare platforms.

As a backup crew member, Hines will train alongside Bresnik, Parmitano, Rubio, and Douglas. Should a prime crew member be unable to participate in the mission, he would join the Artemis III crew. Hines previously served as pilot of NASA’s SpaceX Crew-4 mission to the International Space Station. Selected by NASA in the 2017 astronaut candidate class, he served as a research pilot at the agency’s Johnson Space Center before his selection. He is a colonel in the U.S. Air Force with more than 27 years of service as an instructor pilot, fighter pilot, and test pilot.

As part of a golden age of innovation and exploration, NASA will send astronauts on increasingly difficult missions to explore more of the Moon for scientific discovery and economic benefits, establish an enduring human presence on the lunar surface, and continue laying the groundwork for the first crewed missions to Mars.

Learn more about the Artemis program:

https://www.nasa.gov/artemis (English)

https://ciencia.nasa.gov/artemis (Spanish)

-end-

Bethany Stevens / Amber Jacobson / María José Viñas

Headquarters, Washington

+1 202-358-1600

bethany.c.stevens@nasa.gov / amber.c.jacobson@nasa.gov / maria-jose.vinasgarcia@nasa.gov

Anna Schneider

Johnson Space Center, Houston

281-483-5111

anna.c.schneider@nasa.gov

NASA Marches Toward Artemis III Mission in 2027, Names Crew Members

2026-06-09 16:12

Taking another step toward one of the most complex human spaceflight missions in recent history, NASA on Tuesday provided new Artemis III details and announced the four prime crew members and a backup for the test flight. The mission will undertake a series of challenging tests in Earth orbit in 2027, essential for Artemis IV, the first planned crewed mission to the lunar South Pole in 2028.

During Artemis III, the agency’s SLS (Space Launch System) rocket will launch the Orion spacecraft and its crew from NASA’s Kennedy Space Center in Florida to low Earth orbit. After Orion systems checkouts, the spacecraft will, for the first time, demonstrate rendezvous and docking capabilities with test versions of one or both American commercial human landing systems in development by Blue Origin and SpaceX. This highly choreographed mission includes a dramatic multi-launch campaign of the world’s most powerful rockets and will test integrated hardware between Orion and the landers, including system interfaces, software, propulsion, and communications.

Crew assignments are as follows:

- NASA astronaut Randy Bresnik, commander

- ESA (European Space Agency) astronaut Luca Parmitano, pilot

- NASA astronaut Andre Douglas, mission specialist

- NASA astronaut Frank Rubio, mission specialist

As part of Tuesday’s event, NASA astronaut Bob Hines was named as a backup crew member. The crew will begin training immediately on Orion spacecraft systems and will assist in the development and operations of the test versions of the Blue Origin and SpaceX landers.

“Today we take another bold step in humanity’s return to the Moon, building on the extraordinary foundation laid by the Artemis II astronauts,” said NASA Administrator Jared Isaacman. “Their achievements reignited global excitement for exploration, and now they pass the torch to the Artemis III team, Randy, Luca, Frank, and Andre. Artemis III will demonstrate the power of American innovation and international partnership as we test complex rendezvous and docking operations and advance the technologies that will one day carry us deeper into the solar system. This mission will require the most awe-inspiring coordination of heavy-lift rocket launches in history, drawing on the talent and capability of teams across government and the spaceflight community. The Artemis III astronauts, alongside ESA and our international partners, and the tens of thousands of the best and brightest across the agency and industry, are ushering in a new Golden Age of exploration carrying forward the hopes and dreams of the next generation just as the Apollo astronauts did for so many of us.”

This is also the first time an ESA astronaut has been assigned to an Artemis mission.

“Artemis III will push the boundaries of spacecraft operations in orbit. Luca’s assignment as pilot reflects the depth of European expertise in human spaceflight and draws on his extensive operational experience in high-pressure situations,” said Josef Aschbacher, ESA’s director general. “At the same time, ESA’s European Service Module will once again provide the critical capabilities that power Orion, demonstrating Europe’s enduring role at the very heart of the Artemis program. The news out of Houston today is a powerful recognition of ESA’s role in enabling humanity’s return to the Moon – and a key advancement in our partnership with NASA. Europeans can take pride in being part of this exciting journey.”

Mission progress

NASA and its partners are making progress preparing for the test flight.

Engineers will connect the Orion crew module and service module this summer and integrate the spacecraft’s docking system, which will fly for the first time. Heat shield testing continues: individual blocks have undergone ultrasonic inspection and been installed onto the heat shield structure.

Rocket processing is also well underway. Technicians for SLS are integrating the engine section with the rest of the core stage ahead of installing the four RS-25 engines this summer. With all solid rocket booster segments now at NASA Kennedy and mobile launcher refurbishments on track, rocket stacking is also scheduled to begin this summer. NASA continues design and fabrication of a spacer that will replace the upper stage on Artemis III.

Blue Origin is developing a crewed lunar version of the company’s Blue Moon lander, while SpaceX is developing a crewed lunar lander version of the company’s Starship, with both companies building test articles for Artemis III. NASA is supporting both lander providers hands-on throughout design, development, testing, and evaluation, including sharing agency expertise and capabilities gained from previous missions.

In addition to status updates from NASA and both commercial partners, the agency discussed details during the event about the planned operations for Artemis III, which will support an increased mission cadence, ramp up production, and drive supply chain improvements for the Artemis program.

The Artemis III mission builds on the successful Artemis II flight completed in April and will help the agency prepare to send the first astronauts, Americans, to Mars.

Artemis III includes launching the world’s most powerful rockets in short order. Blue Origin’s lander pathfinder, which is able to stay in orbit for multiple weeks, will launch first and await the crew. NASA will then launch the astronauts aboard Orion on SLS into Earth orbit, where Orion will rendezvous with the company’s lander test article and spend about two days docked for tests and technology demonstrations, including entering the lander.

After completing docked operations with Blue Origin, Orion will detach and await Starship. SpaceX’s Starship pathfinder will launch and meet up with Orion to spend about a day connected for checkouts and testing. After that, Orion and its crew will undock and return home, splashing down safely in the Pacific Ocean, where a team from the U.S. Navy and NASA will recover the astronauts.

In total, the crew is expected to remain in space for about two weeks, with exact mission length to be determined in real time based on launch, rendezvous, and docked operations.

Learn more about Artemis III crew members

This will be the third mission to space for Bresnik, having launched aboard space shuttle Atlantis on the STS-129 mission to the International Space Station in 2009. He later flew on the Soyuz MS-05 spacecraft from the Baikonur Cosmodrome in Kazakhstan to the space station, serving as a flight engineer for the station’s Expedition 52 and commander of Expedition 53. A California native, he graduated from The Citadel with a degree in mathematics and was selected by NASA in the 2004 astronaut candidate class. A retired U.S. Marine colonel, he has logged more than 7,000 hours in 95 types of aircraft and is a fellow in the Society of Experimental Test Pilots. Since 2018, he has served as assistant to the chief of the Astronaut Office for exploration, overseeing the development and testing of the spacecraft and systems that will operate during Artemis missions.

Artemis III also will be the third spaceflight for Parmitano. Selected by ESA as an astronaut in 2009, he first served as a flight engineer on the Italian Space Agency’s (ASI) first long-duration mission to the space station, launching on a Soyuz from Baikonur in 2013. He returned to the orbital laboratory in 2019 aboard Soyuz MS-13 for his second mission, during which he served as commander of Expedition 61, becoming the third European, and the first Italian, to command the station. Parmitano earned a bachelor’s degree in political sciences from the University of Naples Federico II and a master’s degree in experimental flight test engineering from the Institut Supérieur de l’Aéronautique et de l’Espace in Toulouse, France. A graduate of the Italian Air Force Academy, he became a test pilot in 2007 and was promoted to colonel in 2019. He has logged more than 2,000 flight hours across 40 types of aircraft.

Rubio is making his second trip to space. He launched aboard the Soyuz MS-22 spacecraft from Baikonur to the space station on Sept. 21, 2022, and returned on Sept. 27, 2023, breaking the record for the longest single-duration spaceflight by an American astronaut with 371 days in orbit. Rubio was selected by NASA in the 2017 astronaut candidate class. A Florida native, he graduated from the U.S. Military Academy in 1998, earned a doctor of medicine from the Uniformed Services University of the Health Sciences in 2010, and has served for more than 28 years in the U.S. Army as an aviator, a physician, and an astronaut.

The mission is Douglas’ first spaceflight. Selected by NASA in the 2021 astronaut candidate class, he previously served as a backup and closeout crew member for the agency’s Artemis II mission. A Virginia native, Douglas earned a bachelor’s degree in mechanical engineering from the U.S. Coast Guard Academy and four postgraduate degrees from various institutions, including a doctorate in systems engineering from George Washington University. During his time in the Coast Guard, he conducted search and rescue, maritime salvage, and drug interdiction operations. Additionally, his time at the Johns Hopkins University Applied Physics Laboratory involved designing and testing multidomain autonomous vehicles, space exploration systems, and numerous undersea warfare platforms.

Serving as a backup crew member, Hines will train alongside Bresnik, Parmitano, Rubio, and Douglas. Should a primary crew member be unable to participate in the mission, he would join the Artemis III crew. Hines previously served as pilot of NASA’s SpaceX Crew-4 mission to the International Space Station. Selected by NASA in the 2017 astronaut candidate class, he served as a research pilot at the agency’s Johnson Space Center prior to his selection. He is a colonel in the U.S. Air Force with more than 27 years of service as an instructor pilot, fighter pilot, and test pilot.

As part of the Golden Age of innovation and exploration, NASA will send Artemis astronauts on increasingly difficult missions to explore more of the Moon for scientific discovery and economic benefits, to establish an enduring human presence on the lunar surface, and to build on our foundation for the first crewed missions to Mars.

Learn more about NASA’s Artemis program:

https://www.nasa.gov/artemis

-end-

Bethany Stevens / Amber Jacobson

Headquarters, Washington

202-358-1600

bethany.c.stevens@nasa.gov / amber.c.jacobson@nasa.gov

Anna Schneider

Johnson Space Center, Houston

281-483-5111

anna.c.schneider@nasa.gov

TechCrunch - Latest

Waymo says it built a better benchmark for comparing robotaxis to humans

2026-06-10 09:00

Meta signs first AI data center deal in India with Reliance

2026-06-10 07:05

Top Lucid Motors executive departs amid new CEO’s leadership shakeup

2026-06-10 03:35

Google just fired a warning shot in the AI subscription price wars

2026-06-10 00:26

How Justin Ernest invested nearly $500M into hot startups without a traditional VC fund

2026-06-09 23:17